As AI transitions from passive tools to autonomous actors, organizations must embed accountability and decision boundaries directly into system operations to ensure reliability.

Over the past year, AI has quietly shifted roles. Many systems are no longer just analyzing data or supporting decisions—they’re beginning to take small actions within workflows, connect systems, and operate with a degree of autonomy.

This doesn’t change everything overnight. But it does change how organizations think about trust, oversight, and responsibility. So for our first newsletter of the year, we want to explore a simple idea: as AI moves from tools to actors, governance needs to evolve with it.

As AI systems evolve into agents, traditional software governance starts to feel incomplete.

These systems don’t just follow instructions—they interpret goals, evaluate options, and act across systems with limited supervision. Once an AI system is granted agency, the key question is no longer whether it follows rules, but how accountability is maintained when decisions are made autonomously.

In practice, AI is becoming an operational participant inside the enterprise, not just a technical component.

In agent-based systems, trust is rarely binary.

An agent may be trusted to act independently within a narrow scope, while requiring oversight for higher-impact or higher-uncertainty decisions. Autonomy may be limited by workflow, time window, or data quality.
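Bounded trust can be written down as explicit limits. The minimal Python sketch below uses a hypothetical `AutonomyGrant` record and `may_act_alone` check, which are illustrations and not any particular product's API. An agent acts on its own only when the action, workflow, time of day, input data quality, and estimated impact all fall inside the grant. Anything outside those limits goes to oversight.

```python
from dataclasses import dataclass
from datetime import time


@dataclass(frozen=True)
class AutonomyGrant:
    """Hypothetical description of the bounds within which an agent may act alone."""
    allowed_actions: frozenset[str]  # narrow scope of independent action
    workflow: str                    # the workflow this grant applies to
    window_start: time               # permitted time window (assumed not to cross midnight)
    window_end: time
    min_data_quality: float          # assumed 0.0-1.0 quality score for the inputs
    max_impact: int                  # highest impact level allowed without review


def may_act_alone(grant: AutonomyGrant, action: str, workflow: str,
                  at: time, data_quality: float, impact: int) -> bool:
    """True only if every bound in the grant holds; otherwise require oversight."""
    return (
        action in grant.allowed_actions
        and workflow == grant.workflow
        and grant.window_start <= at <= grant.window_end
        and data_quality >= grant.min_data_quality
        and impact <= grant.max_impact
    )


grant = AutonomyGrant(
    allowed_actions=frozenset({"create_ticket", "label_record"}),
    workflow="maintenance_triage",
    window_start=time(6, 0),
    window_end=time(18, 0),
    min_data_quality=0.8,
    max_impact=1,
)

# In-scope, low-impact action during the window on good data: act alone.
print(may_act_alone(grant, "create_ticket", "maintenance_triage", time(9, 30), 0.95, 1))
# Same action outside the time window: escalate instead.
print(may_act_alone(grant, "create_ticket", "maintenance_triage", time(23, 0), 0.95, 1))
# Same action on poor-quality input data: escalate instead.
print(may_act_alone(grant, "create_ticket", "maintenance_triage", time(9, 30), 0.5, 1))
```

The grant is stored as data instead of being scattered through the agent's logic. This makes it simple to narrow or widen as trust is reassessed.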

This mirrors how authority works in human organizations. Trust is contextual, bounded, and continuously reassessed. As AI systems take on more responsibility, their authority should be granted and reviewed in the same way.

Policies and guidelines are important—but for AI agents, they’re not enough.

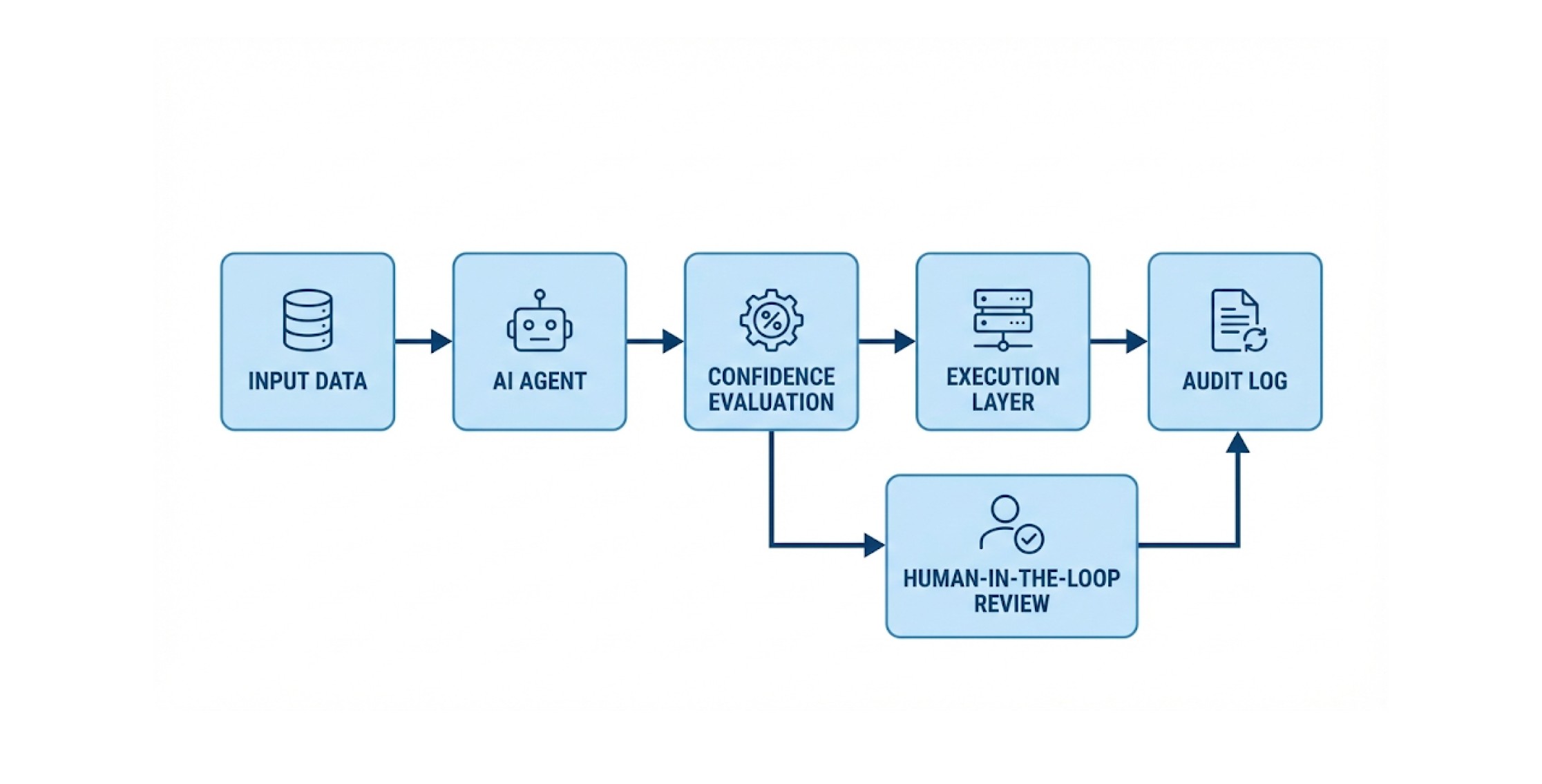

Governance has to be embedded directly into how the system operates. Decision boundaries should be enforced at runtime. Escalation should trigger when confidence drops or ambiguity increases. Actions should remain traceable and explainable as part of normal operation.
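Here is a minimal sketch of runtime enforcement. It assumes the agent reports a confidence for its chosen action and for the next-best alternative, which is a hypothetical interface (real systems expose uncertainty in many ways). The `Guard` class, its thresholds, and the `Proposal` fields are all illustrative assumptions. The guard blocks actions outside the boundary. It escalates to a person when confidence is low or the margin between options is narrow. Every decision is appended to a trace, including the ones that go ahead.

```python
import time
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Proposal:
    """Hypothetical action proposal reported by an agent."""
    action: str
    confidence: float   # confidence in the chosen action, assumed in [0, 1]
    runner_up: float    # confidence in the best alternative option


@dataclass
class Guard:
    """Runtime decision boundary with escalation and tracing (illustrative)."""
    allowed_actions: set[str]
    min_confidence: float = 0.85  # assumed threshold; tune per workflow
    min_margin: float = 0.15      # a narrow gap between options signals ambiguity
    trace: list[dict] = field(default_factory=list)

    def decide(self, p: Proposal) -> str:
        margin = p.confidence - p.runner_up
        if p.action not in self.allowed_actions:
            outcome, reason = "blocked", "action outside decision boundary"
        elif p.confidence < self.min_confidence:
            outcome, reason = "escalate", f"low confidence ({p.confidence:.2f})"
        elif margin < self.min_margin:
            outcome, reason = "escalate", f"ambiguous: margin {margin:.2f}"
        else:
            outcome, reason = "execute", "within boundary and confident"
        # Record every decision, not just failures, so behavior stays explainable.
        self.trace.append({"ts": time.time(), "action": p.action,
                           "outcome": outcome, "reason": reason})
        return outcome


guard = Guard(allowed_actions={"update_tag", "reassign_ticket"})
for proposal in [
    Proposal("update_tag", 0.95, 0.40),    # confident, clear winner
    Proposal("update_tag", 0.70, 0.20),    # confidence too low
    Proposal("update_tag", 0.90, 0.85),    # two options nearly tied
    Proposal("delete_asset", 0.99, 0.10),  # not permitted at all
]:
    print(proposal.action, "->", guard.decide(proposal))
```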

Governance, in this sense, isn’t something added later. It’s a design choice.

One example of governance-aware, workflow-style agentic AI in production is the BKOAI Contextualization Agent for Equipment Mapping.

Large industrial facilities often contain tens of thousands of raw sensor and engineering tags—spread across SCADA systems, PI servers, maintenance databases, and engineering documents. Mapping these tags into a correct equipment hierarchy in a single LLM pass quickly leads to context overload and error propagation.

Instead, BKOAI uses a structured workflow agent to incrementally contextualize equipment data, producing a continuously evolving equipment knowledge graph that unifies PI tags, documentation, and engineering hierarchies. This approach favors controlled execution, traceability, and repeatability over unrestricted autonomy—making it better suited for high-precision industrial environments.
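The sketch below shows the general pattern of incremental contextualization. It is not BKOAI's implementation. Tags are processed in small batches in a fixed order. A mapping is accepted only above a confidence threshold, uncertain tags go to human review, and every step is logged. The `contextualize` function, the `naming_convention_proposer` heuristic, and the `P101.FLOW`-style tag names are hypothetical stand-ins for whatever model or rules propose a parent equipment.

```python
from collections import defaultdict
from typing import Callable, Dict, Iterable, List, Optional, Set, Tuple

# Hypothetical proposer: given a tag and the graph built so far, return
# (equipment_id, confidence), or None if it has no candidate.
Proposer = Callable[[str, Dict[str, Set[str]]], Optional[Tuple[str, float]]]


def contextualize(tags: Iterable[str], propose: Proposer,
                  batch_size: int = 50, min_confidence: float = 0.9):
    """Map tags to equipment in small batches instead of one large pass."""
    graph: Dict[str, Set[str]] = defaultdict(set)  # equipment -> mapped tags
    review: List[str] = []                          # tags left for a human
    log: List[Tuple[int, str, str]] = []            # (batch, tag, outcome)
    ordered = sorted(set(tags))                     # fixed order -> repeatable runs
    for start in range(0, len(ordered), batch_size):
        batch_no = start // batch_size
        for tag in ordered[start:start + batch_size]:
            candidate = propose(tag, graph)         # sees the graph built so far
            if candidate is not None and candidate[1] >= min_confidence:
                graph[candidate[0]].add(tag)
                log.append((batch_no, tag, f"mapped to {candidate[0]}"))
            else:
                review.append(tag)
                log.append((batch_no, tag, "sent to review"))
    return dict(graph), review, log


def naming_convention_proposer(tag: str, graph: Dict[str, Set[str]]):
    """Toy heuristic assuming tags look like 'P101.FLOW' -> equipment 'P101'."""
    if "." not in tag:
        return None
    equipment = tag.split(".", 1)[0]
    # Slightly more confident once the equipment already exists in the graph.
    return equipment, (0.95 if equipment in graph else 0.9)


graph, review, log = contextualize(
    ["P101.FLOW", "P101.PRESS", "V7.POS", "UNKNOWN_TAG_42"],
    naming_convention_proposer, batch_size=2)
print(graph)
print(review)
for entry in log:
    print(entry)
```

Each step works with a small amount of context and the graph built so far, not the whole tag list at once. Batch boundaries also give natural checkpoints where a person can inspect progress before the agent continues.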

Read more: https://www.bkoai.com/use-cases/pi-mapping-intelligence-system

The biggest risks in agentic systems are often quiet ones—systems that slowly drift, optimize the wrong objective, or reinforce flawed assumptions while appearing to work fine.

That’s why trust in AI agents needs to be practical. At any point, teams should be able to understand what an agent did, why it did it, and who allowed it to act that way.
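One practical way to answer "what, why, and who" is to write an audit entry for every agent action. This minimal sketch assumes a hypothetical `AgentActionRecord` schema and uses an in-memory list as a stand-in for append-only storage. Field names and example values are illustrative.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List, Tuple


@dataclass(frozen=True)
class AgentActionRecord:
    """Hypothetical audit entry: what the agent did, why, and who allowed it."""
    agent_id: str
    action: str                  # what: the action taken
    rationale: str               # why: the agent's stated reason
    evidence: Tuple[str, ...]    # why: inputs the decision relied on
    authorized_by: str           # who: person or policy that granted the authority
    policy_version: str          # which version of the decision boundary applied
    timestamp: str


def record_action(log: List[str], **fields) -> AgentActionRecord:
    """Build a record, stamp it in UTC, and append it as one JSON line."""
    entry = AgentActionRecord(
        timestamp=datetime.now(timezone.utc).isoformat(), **fields)
    log.append(json.dumps(asdict(entry), sort_keys=True))
    return entry


audit_log: List[str] = []  # stands in for append-only storage
record_action(
    audit_log,
    agent_id="mapping-agent",
    action="map tag P101.FLOW to equipment P101",
    rationale="tag prefix matches equipment identifier",
    evidence=("pi_tag_export.csv",),
    authorized_by="policy:tag-mapping",
    policy_version="v2",
)
print(audit_log[0])
```

Because `authorized_by` and `policy_version` are recorded alongside the action, the record captures both what the agent did and the authority in effect at that moment.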

AI agents can deliver real value. But autonomy without governance creates risk, not leverage.

As AI moves from tools to actors, trust is no longer assumed. It’s designed.